8. The OpenMM Library: Introduction¶

8.1. What Is the OpenMM Library?¶

OpenMM consists of two parts. First, there is a set of libraries for performing many types of computations needed for molecular simulations: force evaluation, numerical integration, energy minimization, etc. These libraries provide an interface targeted at developers of simulation software, allowing them to easily add simulation features to their programs.

Second, there is an “application layer”, a set of Python libraries providing a high level interface for running simulations. This layer is targeted at computational biologists or other people who want to run simulations, and who may or may not be programmers.

The first part of this guide focused on the application layer and described how to run simulations with it. We now turn to the lower level libraries. We will assume you are a programmer, that you are writing your own applications, and that you want to add simulation features to those applications. The following chapters describe how to do that with OpenMM.

8.1.1. How to get started¶

We have provided a number of files that make it easy to get started with OpenMM. Pre-compiled binaries are provided for quickly getting OpenMM onto your computer (See Chapter 3 for set-up instructions). We recommend that you then compile and run some of the tutorial examples, described in Chapter 10. These highlight key functions within OpenMM and teach you the basic programming concepts for using OpenMM. Once you are ready to begin integrating OpenMM into a specific software package, read through Chapter 13 to see how other software developers have done this.

8.1.2. License¶

Two different licenses are used for different parts of OpenMM. The public API, the low level API, the reference platform, the CPU platform, and the application layer are all distributed under the MIT license. This is a very permissive license which allows them to be used in almost any way, requiring only that you retain the copyright notice and disclaimer when distributing them.

The CUDA and OpenCL platforms are distributed under the GNU Lesser General Public License (LGPL). This also allows you to use, modify, and distribute them in any way you want, but it requires you to also distribute the source code for your modifications. This restriction applies only to modifications to OpenMM itself; you need not distribute the source code to applications that use it.

OpenMM also uses several pieces of code that were written by other people and are covered by other licenses. All of these licenses are similar in their terms to the MIT license, and do not significantly restrict how OpenMM can be used.

All of these licenses may be found in the “licenses” directory included with OpenMM.

8.2. Design Principles¶

The design of the OpenMM API is guided by the following principles.

- The API must support efficient implementations on a variety of architectures.

The most important consequence of this goal is that the API cannot provide direct access to state information (particle positions, velocities, etc.) at all times. On some architectures, accessing this information is expensive. With a GPU, for example, it will be stored in video memory, and must be transferred to main memory before outside code can access it. On a distributed architecture, it might not even be present on the local computer. OpenMM therefore only allows state information to be accessed in bulk, with the understanding that doing so may be a slow operation.

- The API should be easy to understand and easy to use.

This seems obvious, but it is worth stating as an explicit goal. We are creating OpenMM with the hope that many other people will use it. To achieve that goal, it should be possible for someone to learn it without an enormous amount of effort. An equally important aspect of being “easy to use” is being easy to use correctly. A well designed API should minimize the opportunities for a programmer to make mistakes. For both of these reasons, clarity and simplicity are essential.

- It should be modular and extensible.

We cannot hope to provide every feature any user will ever want. For that reason, it is important that OpenMM be easy to extend. If a user wants to add a new molecular force field, a new thermostat algorithm, or a new hardware platform, the API should make that easy to do.

- The API should be hardware independent.

Computer architectures are changing rapidly, and it is impossible to predict what hardware platforms might be important to support in the future. One of the goals of OpenMM is to separate the API from the hardware. The developers of a simulation application should be able to write their code once, and have it automatically take advantage of any architecture that OpenMM supports, even architectures that do not yet exist when they write it.

8.3. Choice of Language¶

Molecular modeling and simulation tools are written in a variety of languages: C, C++, Fortran, Python, TCL, etc. It is important that any of these tools be able to use OpenMM. There are two possible approaches to achieving this goal.

One option is to provide a separate version of the API for each language. These could be created by hand, or generated automatically with a wrapper generator such as SWIG. This would require the API to use only “lowest common denominator” features that can be reasonably supported in all languages. For example, an object oriented API would not be an option, since it could not be cleanly expressed in C or Fortran.

The other option is to provide a single version of the API written in a single language. This would permit a cleaner, simpler API, but also restrict the languages it could be directly called from. For example, a C++ API could not be invoked directly from Fortran or Python.

We have chosen to use a hybrid of these two approaches. OpenMM is based on an object oriented C++ API. This is the primary way to invoke OpenMM, and is the only API that fully exposes all features of the library. We believe this will ultimately produce the best, easiest to use API and create the least work for developers who use it. It does require that any code which directly invokes this API must itself be written in C++, but this should not be a significant burden. Regardless of what language we had chosen, developers would need to write a thin layer for translating between their own application’s data model and OpenMM. That layer is the only part which needs to be written in C++.

In addition, we have created wrapper APIs that allow OpenMM to be invoked from other languages. The current release includes wrappers for C, Fortran, and Python. These wrappers support as many features as reasonably possible given the constraints of the particular languages, but some features cannot be fully supported. In particular, writing plug-ins to extend the OpenMM API can only be done in C++.

We are also aware that some features of C++ can easily lead to compatibility and portability problems, and we have tried to avoid those features. In particular, we make minimal use of templates and avoid multiple inheritance altogether. Our goal is to support OpenMM on all major compilers and operating systems.

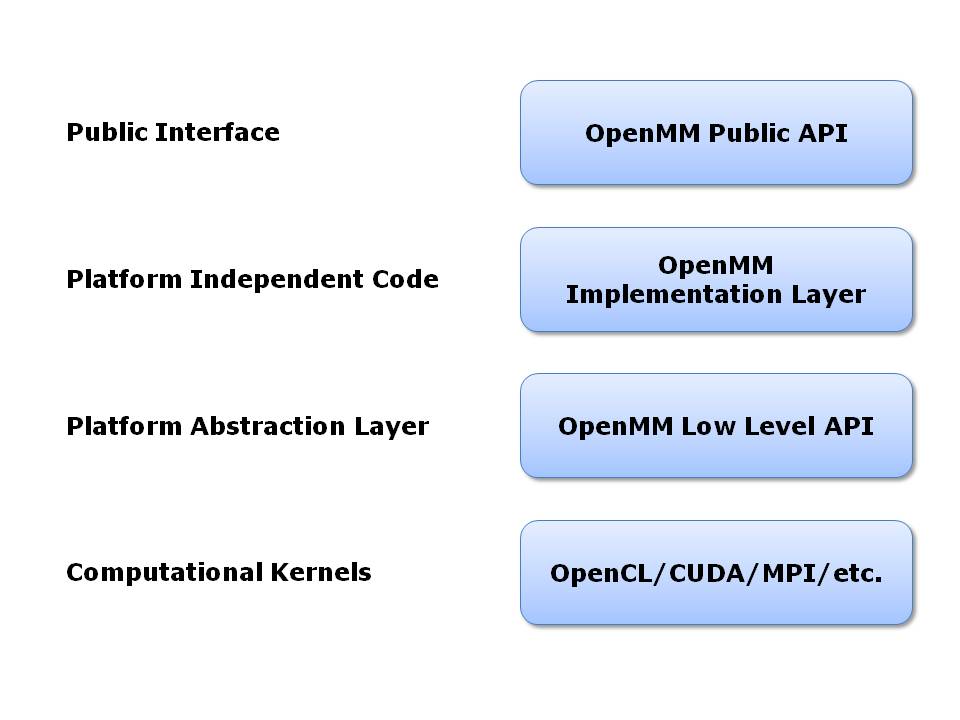

8.4. Architectural Overview¶

OpenMM is based on a layered architecture, as shown in the following diagram:

At the highest level is the OpenMM public API. This is the API developers program against when using OpenMM within their own applications. It is designed to be simple, easy to understand, and completely platform independent. This is the only layer that many users will ever need to look at.

The public API is implemented by a layer of platform independent code. It serves as the interface to the lower level, platform specific code. Most users will never need to look at it.

The next level down is the OpenMM Low Level API (OLLA). This acts as an abstraction layer to hide the details of each hardware platform. It consists of a set of C++ interfaces that each platform must implement. Users who want to extend OpenMM will need to write classes at the OLLA level. Note the different roles played by the public API and the low level API: the public API defines an interface for users to invoke in their own code, while OLLA defines an interface that users must implement, and that is invoked by the OpenMM implementation layer.

At the lowest level is hardware specific code that actually performs computations. This code may be written in any language and use any technologies that are appropriate. For example, code for GPUs will be written in stream processing languages such as OpenCL or CUDA, code written to run on clusters will use MPI or other distributed computing tools, code written for multicore processors will use threading tools such as Pthreads or OpenMP, etc. OpenMM sets no restrictions on how these computational kernels are written. As long as they are wrapped in the appropriate OLLA interfaces, OpenMM can use them.

8.5. The OpenMM Public API¶

The public API is based on a small number of classes:

System: A System specifies generic properties of the system to be simulated: the number of particles it contains, the mass of each one, the size of the periodic box, etc. The interactions between the particles are specified through a set of Force objects (see below) that are added to the System. Force field specific parameters, such as particle charges, are not direct properties of the System. They are properties of the Force objects contained within the System.

Force: The Force objects added to a System define the behavior of the particles. Force is an abstract class; subclasses implement specific behaviors. The Force class is actually slightly more general than its name suggests. A Force can, indeed, apply forces to particles, but it can also directly modify particle positions and velocities in arbitrary ways. Some thermostats and barostats, for example, can be implemented as Force classes. Examples of Force subclasses include HarmonicBondForce, NonbondedForce, and MonteCarloBarostat.

Context: This stores all of the state information for a simulation: particle positions and velocities, as well as arbitrary parameters defined by the Forces in the System. It is possible to create multiple Contexts for a single System, and thus have multiple simulations of that System in progress at the same time.

Integrator: This implements an algorithm for advancing the simulation through time. It is an abstract class; subclasses implement specific algorithms. Examples of Integrator subclasses include LangevinIntegrator, VerletIntegrator, and BrownianIntegrator.

State: A State stores a snapshot of the simulation at a particular point in time. It is created by calling a method on a Context. As discussed earlier, this is a potentially expensive operation. This is the only way to query the values of state variables, such as particle positions and velocities; Context does not provide methods for accessing them directly.

Here is an example of what the source code to create a System and run a simulation might look like:

System system;

for (int i = 0; i < numParticles; ++i)

system.addParticle(particle[i].mass);

HarmonicBondForce* bonds = new HarmonicBondForce();

system.addForce(bonds);

for (int i = 0; i < numBonds; ++i)

bonds->addBond(bond[i].particle1, bond[i].particle2,

bond[i].length, bond[i].k);

HarmonicAngleForce* angles = new HarmonicAngleForce();

system.addForce(angles);

for (int i = 0; i < numAngles; ++i)

angles->addAngle(angle[i].particle1, angle[i].particle2,

angle[i].particle3, angle[i].angle, angle[i].k);

// ...create and initialize other force field terms in the same way

LangevinIntegrator integrator(temperature, friction, stepSize);

Context context(system, integrator);

context.setPositions(initialPositions);

context.setVelocities(initialVelocities);

integrator.step(10000);

We create a System, add various Forces to it, and set parameters on both the System and the Forces. We then create a LangevinIntegrator, initialize a Context in which to run a simulation, and instruct the Integrator to advance the simulation for 10,000 time steps.

8.6. The OpenMM Low Level API¶

The OpenMM Low Level API (OLLA) defines a set of interfaces that users must implement in their own code if they want to extend OpenMM, such as to create a new Force subclass or support a new hardware platform. It is based on the concept of “kernels” that define particular computations to be performed.

More specifically, there is an abstract class called KernelImpl. Instances of this class (or rather, of its subclasses) are created by KernelFactory objects. These classes provide the concrete implementations of kernels for a particular platform. For example, to perform calculations on a GPU, one would create one or more KernelImpl subclasses that implemented the computations with GPU kernels, and one or more KernelFactory subclasses to instantiate the KernelImpl objects.

All of these objects are encapsulated in a single object that extends Platform. KernelFactory objects are registered with the Platform to be used for creating specific named kernels. The choice of what implementation to use (a GPU implementation, a multithreaded CPU implementation, an MPI-based distributed implementation, etc.) consists entirely of choosing what Platform to use.

As discussed so far, the low level API is not in any way specific to molecular simulation; it is a fairly generic computational API. In addition to defining the generic classes, OpenMM also defines abstract subclasses of KernelImpl corresponding to specific calculations. For example, there is a class called CalcHarmonicBondForceKernel to implement HarmonicBondForce and a class called IntegrateLangevinStepKernel to implement LangevinIntegrator. It is these classes for which each Platform must provide a concrete subclass.

This architecture is designed to allow easy extensibility. To support a new hardware platform, for example, you create concrete subclasses of all the abstract kernel classes, then create appropriate factories and a Platform subclass to bind everything together. Any program that uses OpenMM can then use your implementation simply by specifying your Platform subclass as the platform to use.

Alternatively, you might want to create a new Force subclass to implement a new type of interaction. To do this, define an abstract KernelImpl subclass corresponding to the new force, then write the Force class to use it. Any Platform can support the new Force by providing a concrete implementation of your KernelImpl subclass. Furthermore, you can easily provide that implementation yourself, even for existing Platforms created by other people. Simply create a new KernelFactory subclass for your kernel and register it with the Platform object. The goal is to have a completely modular system. Each module, which might be distributed as an independent library, can either add new features to existing platforms or support existing features on new platforms.

In fact, there is nothing “special” about the kernel classes defined by OpenMM. They are simply KernelImpl subclasses that happen to be used by Forces and Integrators that happen to be bundled with OpenMM. They are treated exactly like any other KernelImpl, including the ones you define yourself.

It is important to understand that OLLA defines an interface, not an implementation. It would be easy to assume a one-to-one correspondence between KernelImpl objects and the pieces of code that actually perform calculations, but that need not be the case. For a GPU implementation, for example, a single KernelImpl might invoke several GPU kernels. Alternatively, a single GPU kernel might perform the calculations of several KernelImpl subclasses.

8.7. Platforms¶

This release of OpenMM contains the following Platform subclasses:

ReferencePlatform: This is designed to serve as reference code for writing other platforms. It is written with simplicity and clarity in mind, not performance.

CpuPlatform: This platform provides high performance when running on conventional CPUs.

CudaPlatform: This platform is implemented using the CUDA language, and performs calculations on Nvidia GPUs.

OpenCLPlatform: This platform is implemented using the OpenCL language, and performs calculations on a variety of types of GPUs and CPUs.

The choice of which platform to use for a simulation depends on various factors:

- The Reference platform is much slower than the others, and therefore is rarely used for production simulations.

- The CPU platform is usually the fastest choice when a fast GPU is not available. However, it requires the CPU to support SSE 4.1. That includes most CPUs made in the last several years, but this platform may not be available on some older computers. Also, for simulations that use certain features (primarily the various “custom” force classes), it may be faster to use the OpenCL platform running on the CPU.

- The CUDA platform can only be used with NVIDIA GPUs. For using an AMD or Intel GPU, use the OpenCL platform.

- When running on recent NVIDIA GPUs (Fermi and Kepler generations), the CUDA platform is usually faster and should be used. On older GPUs, the OpenCL platform is likely to be faster. Also, some very old GPUs (GeForce 8000 and 9000 series) are only supported by the OpenCL platform, not by the CUDA platform.

- The AMOEBA force field only works with the CUDA platform, not with the OpenCL platform. It also works with the Reference and CPU platforms, but the performance is usually too slow to be useful on those platforms.

9. Compiling OpenMM from Source Code¶

This chapter describes the procedure for building and installing OpenMM libraries from source code. It is recommended that you use binary OpenMM libraries, if possible. If there are not suitable binary libraries for your system, consider building OpenMM from source code by following these instructions.

9.1. Prerequisites¶

Before building OpenMM from source, you will need the following:

- A C++ compiler

- CMake

- OpenMM source code

See the sections below for specific instructions for the different platforms.

9.1.1. Get a C++ compiler¶

You must have a C++ compiler installed before attempting to build OpenMM from source.

9.1.1.1. Mac and Linux: clang or gcc¶

Use clang or gcc on Mac/Linux. OpenMM should compile correctly with all recent versions of these compilers. We recommend clang since it produces faster code, especially when using the CPU platform.

If you do not already have a compiler installed, you will need to download and install it. On Mac OS X, this means downloading the Xcode Tools from the App Store. (With Xcode 4.3, you must then launch Xcode, open the Preferences window, go to the Downloads tab, and tell it to install the command line tools. With Xcode 4.2 and earlier, the command line tools are automatically installed when you install Xcode.)

9.1.1.2. Windows: Visual Studio¶

On Windows systems, use the C++ compiler in Visual Studio version 10 (2010) or later. You can download a free version of Visual C++ Express Edition from http://www.microsoft.com/express/vc/. If you plan to use use OpenMM from Python, it is critical that both OpenMM and Python be compiled with the same version of Visual Studio.

9.1.2. Install CMake¶

CMake is the build system used for OpenMM. You must install CMake version 2.8 or higher before attempting to build OpenMM from source. You can get CMake from http://www.cmake.org/. If you choose to build CMake from source on Linux, make sure you have the curses library installed beforehand, so that you will be able to build the CCMake visual CMake tool.

9.1.3. Get the OpenMM source code¶

You will also need the OpenMM source code before building OpenMM from source. To download and unpack OpenMM source code:

- Browse to https://simtk.org/home/openmm.

- Click the “Downloads” link in the navigation bar on the left side.

- Download OpenMM<Version>-Source.zip, choosing the latest version.

- Unpack the zip file. Note the location where you unpacked the OpenMM source code.

Alternatively, if you want the most recent development version of the code rather than the version corresponding to a particular release, you can get it from https://github.com/pandegroup/openmm. Be aware that the development code is constantly changing, may contain bugs, and should never be used for production work. If you want a stable, well tested version of OpenMM, you should download the source code for the latest release as described above.

9.1.4. Other Required Software¶

There are several other pieces of software you must install to compile certain parts of OpenMM. Which of these you need depends on the options you select in CMake.

For compiling the CUDA Platform, you need:

- CUDA (See Chapter 3 for installation instructions.)

For compiling the OpenCL Platform, you need:

- OpenCL (See Chapter 3 for installation instructions.)

For compiling C and Fortran API wrappers, you need:

- Python 2.6 or later (http://www.python.org)

- Doxygen (http://www.doxygen.org)

- A Fortran compiler

For compiling the Python API wrappers, you need:

- Python 2.6 or later (http://www.python.org)

- SWIG (http://www.swig.org)

- Doxygen (http://www.doxygen.org)

For compiling the CPU platform, you need:

- FFTW, single precision multithreaded version (http://www.fftw.org)

To generate API documentation, you need:

- Doxygen (http://www.doxygen.org)

9.2. Step 1: Configure with CMake¶

9.2.1. Build and source directories¶

First, create a directory in which to build OpenMM. A good name for this directory is build_openmm. We will refer to this as the “build_openmm directory” in the instructions below. This directory will contain the temporary files used by the OpenMM CMake build system. Do not create this build directory within the OpenMM source code directory. This is what is called an “out of source” build, because the build files will not be mixed with the source files.

Also note the location of the OpenMM source directory (i.e., where you unpacked the source code zip file). It should contain a file called CMakeLists.txt. This directory is what we will call the “OpenMM source directory” in the following instructions.

9.2.2. Starting CMake¶

Configuration is the first step of the CMake build process. In the configuration step, the values of important build variables will be established.

9.2.2.1. Mac and Linux¶

On Mac and Linux machines, type the following two lines:

cd build_openmm

ccmake -i <path to OpenMM src directory>

That is not a typo. ccmake has two c’s. CCMake is the visual CMake configuration tool. Press “c” within the CCMake interface to configure CMake. Follow the instructions in the “All Platforms” section below.

9.2.2.2. Windows¶

On Windows, perform the following steps:

- Click Start->All Programs->CMake 2.8->CMake

- In the box labeled “Where is the source code:” browse to OpenMM src directory (containing top CMakeLists.txt)

- In the box labeled “Where to build the binaries” browse to your build_openmm directory.

- Click the “Configure” button at the bottom of the CMake screen.

- Select “Visual Studio 10 2010” from the list of Generators (or whichever version you have installed)

- Follow the instructions in the “All Platforms” section below.

9.2.2.3. All platforms¶

There are several variables that can be adjusted in the CMake interface:

- If you intend to use CUDA (NVIDIA) or OpenCL acceleration, set the variable OPENMM_BUILD_CUDA_LIB or OPENMM_BUILD_OPENCL_LIB, respectively, to ON. Before doing so, be certain that you have installed and tested the drivers for the platform you have selected (see Chapter 3 for information on installing GPU software).

- There are lots of other options starting with OPENMM_BUILD that control whether to build particular features of OpenMM, such as plugins, API wrappers, and documentation.

- Set the variable CMAKE_INSTALL_PREFIX to the location where you want to install OpenMM.

Configure (press “c”) again. Adjust any variables that cause an error.

Continue to configure (press “c”) until no starred/red CMake variables are displayed. Congratulations, you have completed the configuration step.

9.3. Step 2: Generate Build Files with CMake¶

Once the configuration is done, the next step is generation. The generate “g” or “OK” or “Generate” option will not be available until configuration has completely converged.

9.3.1. Windows¶

- Press the “OK” or “Generate” button to generate Visual Studio project files.

- If CMake does not exit automatically, press the close button in the upper- right corner of the CMake title bar to exit.

9.3.2. Mac and Linux¶

- Press “g” to generate the Makefile.

- If CMake does not exit automatically, press “q” to exit.

That’s it! Generation is the easy part. Now it’s time to build.

9.4. Step 3: Build OpenMM¶

9.4.1. Windows¶

- Open the file OpenMM.sln in your openmm_build directory in Visual Studio.

- Set the configuration type to “Release” (not “Debug”) in the toolbar.

- From the Build menu, click Build->Build Solution

- The OpenMM libraries and test programs will be created. This takes some time.

- The test program TestCudaRandom might not build on Windows. This is OK.

9.4.2. Mac and Linux¶

- Type make in the openmm_build directory.

The OpenMM libraries and test programs will be created. This takes some time.

9.5. Step 4: Install OpenMM¶

9.5.1. Windows¶

In the Solution Explorer Panel, far-click/right-click INSTALL->build.

9.5.2. Mac and Linux¶

Type:

make install

If you are installing to a system area, such as /usr/local/openmm/, you will need to type:

sudo make install

9.6. Step 5: Install the Python API¶

9.6.1. Windows¶

In the Solution Explorer Panel, right-click PythonInstall->build.

9.6.2. Mac and Linux¶

Type:

make PythonInstall

If you are installing into the system Python, such as /usr/bin/python, you will need to type:

sudo make PythonInstall

9.7. Step 6: Test your build¶

After OpenMM has been built, you should run the unit tests to make sure it works.

9.7.1. Windows¶

In Visual Studio, far-click/right-click RUN_TESTS in the Solution Explorer Panel. Select RUN_TESTS->build to begin testing. Ignore any failures for TestCudaRandom.

9.7.2. Mac and Linux¶

Type:

make test

You should see a series of test results like this:

Start 1: TestReferenceAndersenThermostat

1/317 Test #1: TestReferenceAndersenThermostat .............. Passed 0.26 sec

Start 2: TestReferenceBrownianIntegrator

2/317 Test #2: TestReferenceBrownianIntegrator .............. Passed 0.13 sec

Start 3: TestReferenceCheckpoints

3/317 Test #3: TestReferenceCheckpoints ..................... Passed 0.02 sec

... <many other tests> ...

Passed is good. FAILED is bad. If any tests fail, you can run them individually to get more detailed error information. Note that some tests are stochastic, and therefore are expected to fail a small fraction of the time. These tests will say so in the error message:

./TestReferenceLangevinIntegrator

exception: Assertion failure at TestReferenceLangevinIntegrator.cpp:129. Expected 9.97741,

found 10.7884 (This test is stochastic and may occasionally fail)

Congratulations! You successfully have built and installed OpenMM from source.

10. OpenMM Tutorials¶

10.1. Example Files Overview¶

Four example files are provided in the examples folder, each designed with a specific objective.

- HelloArgon: A very simple example intended for verifying that you have installed OpenMM correctly. It also introduces you to the basic classes within OpenMM.

- HelloSodiumChloride: This example shows you our recommended strategy for integrating OpenMM into an existing molecular dynamics code.

- HelloEthane: The main purpose of this example is to demonstrate how to tell OpenMM about bonded forces (bond stretch, bond angle bend, dihedral torsion).

- HelloWaterBox: This example shows you how to use OpenMM to model explicit solvation, including setting up periodic boundary conditions. It runs extremely fast on a GPU but very, very slowly on a CPU, so it is an excellent example to use to compare performance on the GPU versus the CPU. The other examples provided use systems where the performance difference would be too small to notice.

The two fundamental examples—HelloArgon and HelloSodiumChloride—are provided in C++, C, and Fortran, as indicated in the table below. The other two examples—HelloEthane and HelloWaterBox—follow the same structure as HelloSodiumChloride but demonstrate more calls within the OpenMM API. They are only provided in C++ but can be adapted to run in C and Fortran by following the mappings described in Chapter 12. HelloArgon and HelloSodiumChloride also serve as examples of how to do these mappings. The sections below describe the HelloArgon, HelloSodiumChloride, and HelloEthane programs in more detail.

| Example | Solvent | Thermostat | Boundary | Forces & Constraints | API |

|---|---|---|---|---|---|

| Argon | Vacuum | None | None | Non-bonded* | C++, C, Fortran |

| Sodium Chloride | Implicit water | Langevin | None | Non-bonded* | C++, C, Fortran |

| Ethane | Vacuum | None | None | Non-bonded*, stretch, bend, torsion | C++ |

| Water Box | Explicit water | Andersen | Periodic | Non-bonded*, stretch, bend, constraints | C++ |

*van der Waals and Coulomb forces

10.2. Running Example Files¶

The instructions below are for running the HelloArgon program. A similar process would be used to run the other examples.

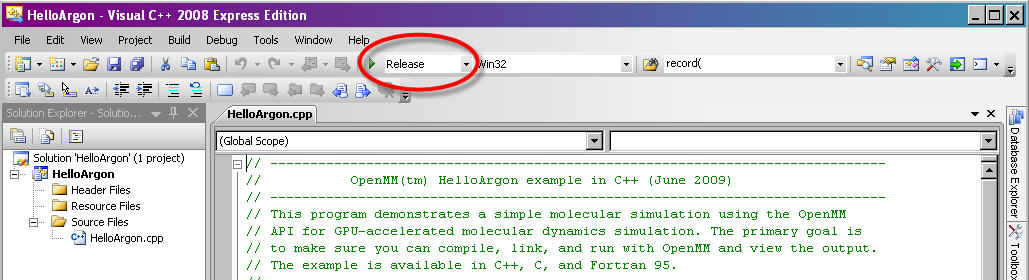

10.2.1. Visual Studio¶

Navigate to wherever you saved the example files. Descend into the directory folder VisualStudio. Double-click the file HelloArgon.sln (a Microsoft Visual Studio Solution file). Visual Studio will launch.

Note: These files were created using Visual Studio 8. If you are using a more recent version, it will ask if you want to convert the files to the new version. Agree and continue through the conversion process.

In Visual Studio, make sure the “Solution Configuration” is set to “Release” and not “Debug”. The “Solution Configuration” can be set using the drop-down menu in the top toolbar, next to the green arrow (see Figure 10-1 below). Due to incompatibilities among Visual Studio versions, we do not provide pre-compiled debug binaries.

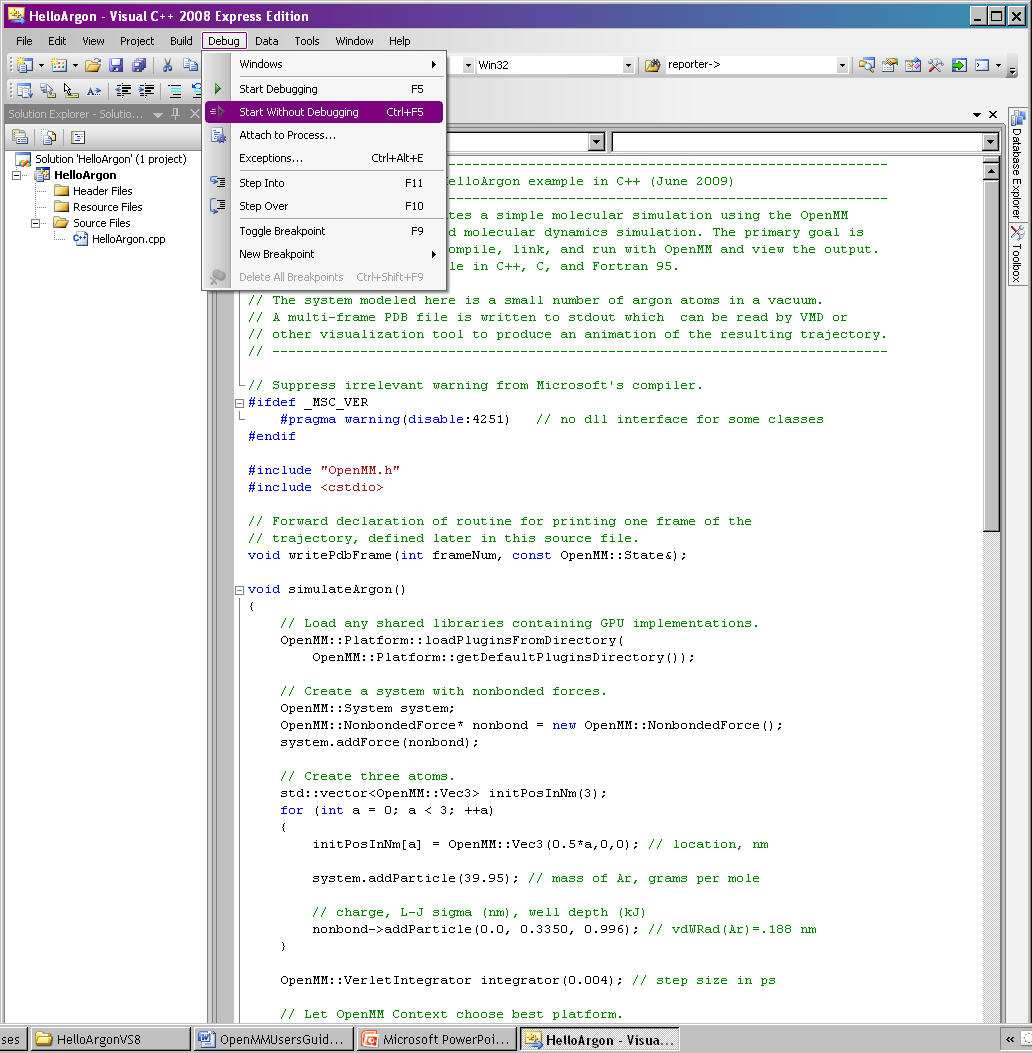

From the command options select Debug -> Start Without Debugging (or CTRL-F5). See Figure 10-2. This will also compile the program, if it has not previously been compiled.

You should see a series of lines like the following output on your screen:

REMARK Using OpenMM platform Reference

MODEL 1

ATOM 1 AR AR 1 0.000 0.000 0.000 1.00 0.00

ATOM 2 AR AR 1 5.000 0.000 0.000 1.00 0.00

ATOM 3 AR AR 1 10.000 0.000 0.000 1.00 0.00

ENDMDL

…

MODEL 250

ATOM 1 AR AR 1 0.233 0.000 0.000 1.00 0.00

ATOM 2 AR AR 1 5.068 0.000 0.000 1.00 0.00

ATOM 3 AR AR 1 9.678 0.000 0.000 1.00 0.00

ENDMDL

MODEL 251

ATOM 1 AR AR 1 0.198 0.000 0.000 1.00 0.00

ATOM 2 AR AR 1 5.082 0.000 0.000 1.00 0.00

ATOM 3 AR AR 1 9.698 0.000 0.000 1.00 0.00

ENDMDL

MODEL 252

ATOM 1 AR AR 1 0.165 0.000 0.000 1.00 0.00

ATOM 2 AR AR 1 5.097 0.000 0.000 1.00 0.00

ATOM 3 AR AR 1 9.717 0.000 0.000 1.00 0.00

ENDMDL

10.2.1.1. Determining the platform being used¶

The very first line of the output will indicate whether you are running on the CPU (Reference platform) or a GPU (CUDA or OpenCL platform). It will say one of the following:

REMARK Using OpenMM platform Reference

REMARK Using OpenMM platform Cuda

REMARK Using OpenMM platform OpenCL

If you have a supported GPU, the program should, by default, run on the GPU.

10.2.1.2. Visualizing the results¶

You can output the results to a PDB file that could be visualized using programs like VMD (http://www.ks.uiuc.edu/Research/vmd/) or PyMol (http://pymol.sourceforge.net/). To do this within Visual Studios:

Right-click on the project name HelloArgon (not one of the files) and select the “Properties” option.

On the “Property Pages” form, select “Debugging” under the “Configuration Properties” node.

In the “Command Arguments” field, type:

> argon.pdb

This will save the output to a file called argon.pdb in the current working directory (default is the VisualStudio directory). If you want to save it to another directory, you will need to specify the full path.

Select “OK”

Now, when you run the program in Visual Studio, no text will appear. After a short time, you should see the message “Press any key to continue…,” indicating that the program is complete and that the PDB file has been completely written.

10.2.2. Mac OS X/Linux¶

Navigate to wherever you saved the example files.

Verify your makefile by consulting the MakefileNotes file in this directory, if necessary.

Type::

make

Then run the program by typing:

./HelloArgon

You should see a series of lines like the following output on your screen:

REMARK Using OpenMM platform Reference

MODEL 1

ATOM 1 AR AR 1 0.000 0.000 0.000 1.00 0.00

ATOM 2 AR AR 1 5.000 0.000 0.000 1.00 0.00

ATOM 3 AR AR 1 10.000 0.000 0.000 1.00 0.00

ENDMDL

...

MODEL 250

ATOM 1 AR AR 1 0.233 0.000 0.000 1.00 0.00

ATOM 2 AR AR 1 5.068 0.000 0.000 1.00 0.00

ATOM 3 AR AR 1 9.678 0.000 0.000 1.00 0.00

ENDMDL

MODEL 251

ATOM 1 AR AR 1 0.198 0.000 0.000 1.00 0.00

ATOM 2 AR AR 1 5.082 0.000 0.000 1.00 0.00

ATOM 3 AR AR 1 9.698 0.000 0.000 1.00 0.00

ENDMDL

MODEL 252

ATOM 1 AR AR 1 0.165 0.000 0.000 1.00 0.00

ATOM 2 AR AR 1 5.097 0.000 0.000 1.00 0.00

ATOM 3 AR AR 1 9.717 0.000 0.000 1.00 0.00

ENDMDL

10.2.2.1. Determining the platform being used¶

The very first line of the output will indicate whether you are running on the CPU (Reference platform) or a GPU (CUDA or OpenCL platform). It will say one of the following:

REMARK Using OpenMM platform Reference

REMARK Using OpenMM platform Cuda

REMARK Using OpenMM platform OpenCL

If you have a supported GPU, the program should, by default, run on the GPU.

10.2.2.2. Visualizing the results¶

You can output the results to a PDB file that could be visualized using programs like VMD (http://www.ks.uiuc.edu/Research/vmd/) or PyMol (http://pymol.sourceforge.net/) by typing:

./HelloArgon > argon.pdb

10.2.2.3. Compiling Fortran and C examples¶

The Makefile provided with the examples can also be used to compile the Fortran and C examples.

The Fortran compiler needs to load a version of the libstdc++.dylib library that is compatible with the version of gcc used to build OpenMM; OpenMM for Mac is compiled using gcc 4.2. If you are compiling with a different version, edit the Makefile and add the following flag to FCPPLIBS: –L/usr/lib/gcc/i686 -apple-darwin10/4.2.1.

When the Makefile has been updated, type:

make all

10.3. HelloArgon Program¶

The HelloArgon program simulates three argon atoms in a vacuum. It is a simple program primarily intended for you to verify that you are able to compile, link, and run with OpenMM. It also demonstrates the basic calls needed to run a simulation using OpenMM.

10.3.1. Including OpenMM-defined functions¶

The OpenMM header file OpenMM.h instructs the program to include everything defined by the OpenMM libraries. Include the header file by adding the following line at the top of your program:

#include "OpenMM.h"

10.3.2. Running a program on GPU platforms¶

By default, a program will run on the Reference platform. In order to run a program on another platform (e.g., an NVIDIA or AMD GPU), you need to load the required shared libraries for that other platform (e.g., Cuda, OpenCL). The easy way to do this is to call:

OpenMM::Platform::loadPluginsFromDirectory(OpenMM::Platform::getDefaultPluginsDirectory());

This will load all the shared libraries (plug-ins) that can be found, so you do not need to explicitly know which libraries are available on a given machine. In this way, the program will be able to run on another platform, if it is available.

10.3.3. Running a simulation using the OpenMM public API¶

The OpenMM public API was described in Section 8.5. Here you will see how to use those classes to create a simple system of three argon atoms and run a short simulation. The main components of the simulation are within the function simulateArgon():

System – We first establish a system and add a non-bonded force to it. At this point, there are no particles in the system.

// Create a system with nonbonded forces. OpenMM::System system; OpenMM::NonbondedForce* nonbond = new OpenMM::NonbondedForce(); system.addForce(nonbond);

We then add the three argon atoms to the system. For this system, all the data for the particles are hard-coded into the program. While not a realistic scenario, it makes the example simpler and clearer. The std::vector<OpenMM::Vec3> is an array of vectors of 3.

// Create three atoms. std::vector<OpenMM::Vec3> initPosInNm(3); for (int a = 0; a < 3; ++a) { initPosInNm[a] = OpenMM::Vec3(0.5*a,0,0); // location, nm system.addParticle(39.95); // mass of Ar, grams per mole // charge, L-J sigma (nm), well depth (kJ) nonbond->addParticle(0.0, 0.3350, 0.996); // vdWRad(Ar)=.188 nm }

Units: Be very careful with the units in your program. It is very easy to make mistakes with the units, so we recommend including them in your variable names, as we have done here initPosInNm (position in nanometers). OpenMM provides conversion constants that should be used whenever there are conversions to be done; for simplicity, we did not do that in HelloArgon, but all the other examples show the use of these constants.

It is hard to overemphasize the importance of careful units handling—it is very easy to make a mistake despite, or perhaps because of, the trivial nature of units conversion. For more information about the units used in OpenMM, see Section 18.2.

Adding Particle Information: Both the system and the non-bonded force require information about the particles. The system just needs to know the mass of the particle. The non-bonded force requires information about the charge (in this case, argon is uncharged), and the Lennard-Jones parameters sigma (zero-energy separation distance) and well depth (see Section 19.6.1 for more details).

Note that the van der Waals radius for argon is 0.188 nm and that it has already been converted to sigma (0.335 nm) in the example above where it is added to the non-bonded force; in your code, you should make use of the appropriate conversion factor supplied with OpenMM as discussed in Section 18.2.

Integrator – We next specify the integrator to use to perform the calculations. In this case, we choose a Verlet integrator to run a constant energy simulation. The only argument required is the step size in picoseconds.

OpenMM::VerletIntegrator integrator(0.004); // step size in ps

We have chosen to use 0.004 picoseconds, or 4 femtoseconds, which is larger than that used in a typical molecular dynamics simulation. However, since this example does not have any bonds with higher frequency components, like most molecular dynamics simulations do, this is an acceptable value.

Context – The context is an object that consists of an integrator and a system. It manages the state of the simulation. The code below initializes the context. We then let the context select the best platform available to run on, since this is not specifically specified, and print out the chosen platform. This is useful information, especially when debugging.

// Let OpenMM Context choose best platform. OpenMM::Context context(system, integrator); printf("REMARK Using OpenMM platform %s\n", context.getPlatform().getName().c_str());

We then initialize the system, setting the initial time, as well as the initial positions and velocities of the atoms. In this example, we leave time and velocity at their default values of zero.

// Set starting positions of the atoms. Leave time and velocity zero. context.setPositions(initPosInNm);

Initialize and run the simulation – The next block of code runs the simulation and saves its output. For each frame of the simulation (in this example, a frame is defined by the advancement interval of the integrator; see below), the current state of the simulation is obtained and written out to a PDB-formatted file.

// Simulate. for (int frameNum=1; ;++frameNum) { // Output current state information. OpenMM::State state = context.getState(OpenMM::State::Positions); const double timeInPs = state.getTime(); writePdbFrame(frameNum, state); // output coordinates

Getting state information has to be done in bulk, asking for information for all the particles at once. This is computationally expensive since this information can reside on the GPUs and requires communication overhead to retrieve, so you do not want to do it very often. In the above code, we only request the positions, since that is all that is needed, and time from the state.

The simulation stops after 10 ps; otherwise we ask the integrator to take 10 steps (so one frame is equivalent to 10 time steps). Normally, we would want to take more than 10 steps at a time, but to get a reasonable-looking animation, we use 10.

if (timeInPs >= 10.) break; // Advance state many steps at a time, for efficient use of OpenMM. integrator.step(10); // (use a lot more than this normally)

10.3.4. Error handling for OpenMM¶

Error handling for OpenMM is explicitly designed so you do not have to check the status after every call. If anything goes wrong, OpenMM throws an exception. It uses standard exceptions, so on many platforms, you will get the exception message automatically. However, we recommend using try-catch blocks to ensure you do catch the exception.

int main()

{

try {

simulateArgon();

return 0; // success!

}

// Catch and report usage and runtime errors detected by OpenMM and fail.

catch(const std::exception& e) {

printf("EXCEPTION: %s\n", e.what());

return 1; // failure!

}

}

10.3.5. Writing out PDB files¶

For the HelloArgon program, we provide a simple PDB file writing function writePdbFrame that only writes out argon atoms. The function has nothing to do with OpenMM except for using the OpenMM State. The function extracts the positions from the State in nanometers (10-9 m) and converts them to Angstroms (10-10 m) to be compatible with the PDB format. Again, we emphasize how important it is to track the units being used!

void writePdbFrame(int frameNum, const OpenMM::State& state)

{

// Reference atomic positions in the OpenMM State.

const std::vector<OpenMM::Vec3>& posInNm = state.getPositions();

// Use PDB MODEL cards to number trajectory frames

printf("MODEL %d\n", frameNum); // start of frame

for (int a = 0; a < (int)posInNm.size(); ++a)

{

printf("ATOM %5d AR AR 1 ", a+1); // atom number

printf("%8.3f%8.3f%8.3f 1.00 0.00\n", // coordinates

// "*10" converts nanometers to Angstroms

posInNm[a][0]*10, posInNm[a][1]*10, posInNm[a][2]*10);

}

printf("ENDMDL\n"); // end of frame

}

MODEL and ENDMDL are used to mark the beginning and end of a frame, respectively. By including multiple frames in a PDB file, you can visualize the simulation trajectory.

10.3.6. HelloArgon output¶

The output of the HelloArgon program can be saved to a .pdb file and visualized using programs like VMD or PyMol (see Section 10.2). You should see three atoms moving linearly away and towards one another:

You may need to adjust the van der Waals radius in your visualization program to see the atoms colliding.

10.4. HelloSodiumChloride Program¶

The HelloSodiumChloride models several sodium (Na+) and chloride (Cl-) ions in implicit solvent (using a Generalized Born/Surface Area, or GBSA, OBC model). As with the HelloArgon program, only non-bonded forces are simulated.

The main purpose of this example is to illustrate our recommended strategy for integrating OpenMM into an existing molecular dynamics (MD) code:

- Write a few, high-level interface routines containing all your OpenMM calls: Rather than make OpenMM calls throughout your program, we recommend writing a handful of interface routines that understand both your MD code’s data structures and OpenMM. Organize these routines into a separate compilation unit so you do not have to make huge changes to your existing MD code. These routines could be written in any language that is callable from the existing MD code. We recommend writing them in C++ since that is what OpenMM is written in, but you can also write them in C or Fortran; see Chapter 12.

- Call only these high-level interface routines from your existing MD code: This provides a clean separation between the existing MD code and OpenMM, so that changes to OpenMM will not directly impact the existing MD code. One way to implement this is to use opaque handles, a standard trick used (for example) for opening files in Linux. An existing MD code can communicate with OpenMM via the handle, but knows none of the details of the handle. It only has to hold on to the handle and give it back to OpenMM.

In the example described below, you will see how this strategy can be implemented for a very simple MD code. Chapter 13 describes the strategies used in integrating OpenMM into real MD codes.

10.4.1. Simple molecular dynamics system¶

The initial sections of HelloSodiumChloride.cpp represent a very simple molecular dynamics system. The system includes modeling and simulation parameters and the atom and force field data. It also provides a data structure posInAng[3] for storing the current state. These sections represent (in highly simplified form) information that would be available from an existing MD code, and will be used to demonstrate how to integrate OpenMM with an existing MD program.

// -----------------------------------------------------------------

// MODELING AND SIMULATION PARAMETERS

// -----------------------------------------------------------------

static const double Temperature = 300; // Kelvins

static const double FrictionInPerPs = 91.; // collisions per picosecond

static const double SolventDielectric = 80.; // typical for water

static const double SoluteDielectric = 2.; // typical for protein

static const double StepSizeInFs = 2; // integration step size (fs)

static const double ReportIntervalInFs = 50; // how often to issue PDB frame (fs)

static const double SimulationTimeInPs = 100; // total simulation time (ps)

// Decide whether to request energy calculations.

static const bool WantEnergy = true;

// -----------------------------------------------------------------

// ATOM AND FORCE FIELD DATA

// -----------------------------------------------------------------

// This is not part of OpenMM; just a struct we can use to collect atom

// parameters for this example. Normally atom parameters would come from the

// force field's parameterization file. We're going to use data in Angstrom and

// Kilocalorie units and show how to safely convert to OpenMM's internal unit

// system which uses nanometers and kilojoules.

static struct MyAtomInfo {

const char* pdb;

double mass, charge, vdwRadiusInAng, vdwEnergyInKcal,

gbsaRadiusInAng, gbsaScaleFactor;

double initPosInAng[3];

double posInAng[3]; // leave room for runtime state info

} atoms[] = {

// pdb mass charge vdwRad vdwEnergy gbsaRad gbsaScale initPos

{" NA ", 22.99, 1, 1.8680, 0.00277, 1.992, 0.8, 8, 0, 0},

{" CL ", 35.45, -1, 2.4700, 0.1000, 1.735, 0.8, -8, 0, 0},

{" NA ", 22.99, 1, 1.8680, 0.00277, 1.992, 0.8, 0, 9, 0},

{" CL ", 35.45, -1, 2.4700, 0.1000, 1.735, 0.8, 0,-9, 0},

{" NA ", 22.99, 1, 1.8680, 0.00277, 1.992, 0.8, 0, 0,-10},

{" CL ", 35.45, -1, 2.4700, 0.1000, 1.735, 0.8, 0, 0, 10},

{""} // end of list

};

10.4.2. Interface routines¶

The key to our recommended integration strategy is the interface routines. You will need to decide what interface routines are required for effective communication between your existing MD program and OpenMM, but typically there will only be six or seven. In our example, the following four routines suffice:

- Initialize: Data structures that already exist in your MD program (i.e., force fields, constraints, atoms in the system) are passed to the Initialize routine, which makes appropriate calls to OpenMM and then returns a handle to the OpenMM object that can be used by the existing MD program.

- Terminate: Clean up the heap space allocated by Initialize by passing the handle to the Terminate routine.

- Advance State: The AdvanceState routine advances the simulation. It requires that the calling function, the existing MD code, gives it a handle.

- Retrieve State: When you want to do an analysis or generate some kind of report, you call the RetrieveState routine. You have to give it a handle. It then fills in a data structure that is defined in the existing MD code, allowing the MD program to use it in its existing routines without further modification.

Note that these are just descriptions of the routines’ functions—you can call them anything you like and implement them in whatever way makes sense for your MD code.

In the example code, the four routines performing these functions, plus an opaque data structure (the handle), would be declared, as shown below. Then, the main program, which sets up, runs, and reports on the simulation, accesses these routines and the opaque data structure (in this case, the variable omm). As you can see, it does not have access to any OpenMM declarations, only to the interface routines that you write so there is no need to change the build environment.

struct MyOpenMMData;

static MyOpenMMData* myInitializeOpenMM(const MyAtomInfo atoms[],

double temperature,

double frictionInPs,

double solventDielectric,

double soluteDielectric,

double stepSizeInFs,

std::string& platformName);

static void myStepWithOpenMM(MyOpenMMData*, int numSteps);

static void myGetOpenMMState(MyOpenMMData*,

bool wantEnergy,

double& time,

double& energy,

MyAtomInfo atoms[]);

static void myTerminateOpenMM(MyOpenMMData*);

// -----------------------------------------------------------------

// MAIN PROGRAM

// -----------------------------------------------------------------

int main() {

const int NumReports = (int)(SimulationTimeInPs*1000 / ReportIntervalInFs + 0.5);

const int NumSilentSteps = (int)(ReportIntervalInFs / StepSizeInFs + 0.5);

// ALWAYS enclose all OpenMM calls with a try/catch block to make sure that

// usage and runtime errors are caught and reported.

try {

double time, energy;

std::string platformName;

// Set up OpenMM data structures; returns OpenMM Platform name.

MyOpenMMData* omm = myInitializeOpenMM(atoms, Temperature, FrictionInPerPs,

SolventDielectric, SoluteDielectric, StepSizeInFs, platformName);

// Run the simulation:

// (1) Write the first line of the PDB file and the initial configuration.

// (2) Run silently entirely within OpenMM between reporting intervals.

// (3) Write a PDB frame when the time comes.

printf("REMARK Using OpenMM platform %s\n", platformName.c_str());

myGetOpenMMState(omm, WantEnergy, time, energy, atoms);

myWritePDBFrame(1, time, energy, atoms);

for (int frame=2; frame <= NumReports; ++frame) {

myStepWithOpenMM(omm, NumSilentSteps);

myGetOpenMMState(omm, WantEnergy, time, energy, atoms);

myWritePDBFrame(frame, time, energy, atoms);

}

// Clean up OpenMM data structures.

myTerminateOpenMM(omm);

return 0; // Normal return from main.

}

// Catch and report usage and runtime errors detected by OpenMM and fail.

catch(const std::exception& e) {

printf("EXCEPTION: %s\n", e.what());

return 1;

}

}

We will examine the implementation of each of the four interface routines and the opaque data structure (handle) in the sections below.

10.4.2.1. Units¶

The simple molecular dynamics system described in Section 10.4.1 employs the commonly used units of angstroms and kcals. These differ from the units and parameters used within OpenMM (see Section 18.2): nanometers and kilojoules. These differences may be small but they are critical and must be carefully accounted for in the interface routines.

10.4.2.2. Lennard-Jones potential¶

The Lennard-Jones potential describes the energy between two identical atoms as the distance between them varies.

The van der Waals “size” parameter is used to identify the distance at which the energy between these two atoms is at a minimum (that is, where the van der Waals force is most attractive). There are several ways to specify this parameter, typically, either as the van der Waals radius rvdw or as the actual distance between the two atoms dmin (also called rmin), which is twice the van der Waals radius rvdw. A third way to describe the potential is through sigma \(\sigma\), which identifies the distance at which the energy function crosses zero as the atoms move closer together than dmin. (See Section 19.6.1 for more details about the relationship between these).

\(\sigma\) turns out to be about 0.89*dmin, which is close enough to dmin that it makes it hard to distinguish the two. Be very careful that you use the correct value. In the example below, we will show you how to use the built-in OpenMM conversion constants to avoid errors.

Lennard-Jones parameters are defined for pairs of identical atoms, but must also be applied to pairs of dissimilar atoms. That is done by “combining rules” that differ among popular MD codes. Two of the most common are:

- Lorentz-Berthelot (used by AMBER, CHARMM):

- Jorgensen (used by OPLS):

where r = the effective van der Waals “size” parameter (minimum radius, minimum distance, or zero crossing (sigma)), and \(\epsilon\) = the effective van der Waals energy well depth parameter, for the dissimilar pair of atoms i and j.

OpenMM only implements Lorentz-Berthelot directly, but others can be implemented using the CustomNonbondedForce class. (See Section 20.4 for details.)

10.4.2.3. Opaque handle MyOpenMMData¶

In this example, the handle used by the interface to OpenMM is a pointer to a struct called MyOpenMMData. The pointer itself is opaque, meaning the calling program has no knowledge of what the layout of the object it points to is, or how to use it to directly interface with OpenMM. The calling program will simply pass this opaque handle from one interface routine to another.

There are many different ways to implement the handle. The code below shows just one example. A simulation requires three OpenMM objects (a System, a Context, and an Integrator) and so these must exist within the handle. If other objects were required for a simulation, you would just add them to your handle; there would be no change in the main program using the handle.

struct MyOpenMMData {

MyOpenMMData() : system(0), context(0), integrator(0) {}

~MyOpenMMData() {delete system; delete context; delete integrator;}

OpenMM::System* system;

OpenMM::Context* context;

OpenMM::Integrator* integrator;

};

In addition to establishing pointers to the required three OpenMM objects, MyOpenMMData has a constructor MyOpenMMData() that sets the pointers for the three OpenMM objects to zero and a destructor ~MyOpenMMData() that (in C++) gives the heap space back. This was done in-line in the HelloArgon program, but we recommend you use something like the method here instead.

10.4.2.4. myInitializeOpenMM¶

The myInitializeOpenMM function takes the data structures and simulation parameters from the existing MD code and returns a new handle that can be used to do efficient computations with OpenMM. It also returns the platformName so the calling program knows what platform (e.g., CUDA, OpenCL, Reference) was used.

static MyOpenMMData*

myInitializeOpenMM( const MyAtomInfo atoms[],

double temperature,

double frictionInPs,

double solventDielectric,

double soluteDielectric,

double stepSizeInFs,

std::string& platformName)

This initialization routine is very similar to the HelloArgon example program, except that objects are created and put in the handle. For instance, just as in the HelloArgon program, the first step is to load the OpenMM plug-ins, so that the program will run on the best performing platform that is available. Then, a System is created and assigned to the handle omm. Similarly, forces are added to the System which is already in the handle.

// Load all available OpenMM plugins from their default location.

OpenMM::Platform::loadPluginsFromDirectory

(OpenMM::Platform::getDefaultPluginsDirectory());

// Allocate space to hold OpenMM objects while we're using them.

MyOpenMMData* omm = new MyOpenMMData();

// Create a System and Force objects within the System. Retain a reference

// to each force object so we can fill in the forces. Note: the OpenMM

// System takes ownership of the force objects;don't delete them yourself.

omm->system = new OpenMM::System();

OpenMM::NonbondedForce* nonbond = new OpenMM::NonbondedForce();

OpenMM::GBSAOBCForce* gbsa = new OpenMM::GBSAOBCForce();

omm->system->addForce(nonbond);

omm->system->addForce(gbsa);

// Specify dielectrics for GBSA implicit solvation.

gbsa->setSolventDielectric(solventDielectric);

gbsa->setSoluteDielectric(soluteDielectric);

In the next step, atoms are added to the System within the handle, with information about each atom coming from the data structure that was passed into the initialization function from the existing MD code. As shown in the HelloArgon program, both the System and the forces need information about the atoms. For those unfamiliar with the C++ Standard Template Library, the push_back function called at the end of this code snippet just adds the given argument to the end of a C++ “vector” container.

// Specify the atoms and their properties:

// (1) System needs to know the masses.

// (2) NonbondedForce needs charges,van der Waals properties(in MD units!).

// (3) GBSA needs charge, radius, and scale factor.

// (4) Collect default positions for initializing the simulation later.

std::vector<Vec3> initialPosInNm;

for (int n=0; *atoms[n].pdb; ++n) {

const MyAtomInfo& atom = atoms[n];

omm->system->addParticle(atom.mass);

nonbond->addParticle(atom.charge,

atom.vdwRadiusInAng * OpenMM::NmPerAngstrom

* OpenMM::SigmaPerVdwRadius,

atom.vdwEnergyInKcal * OpenMM::KJPerKcal);

gbsa->addParticle(atom.charge,

atom.gbsaRadiusInAng * OpenMM::NmPerAngstrom,

atom.gbsaScaleFactor);

// Convert the initial position to nm and append to the array.

const Vec3 posInNm(atom.initPosInAng[0] * OpenMM::NmPerAngstrom,

atom.initPosInAng[1] * OpenMM::NmPerAngstrom,

atom.initPosInAng[2] * OpenMM::NmPerAngstrom);

initialPosInNm.push_back(posInNm);

Units: Here we emphasize the need to pay special attention to the units. As mentioned earlier, the existing MD code in this example uses units of angstroms and kcals, but OpenMM uses nanometers and kilojoules. So the initialization routine will need to convert the values from the existing MD code into the OpenMM units before assigning them to the OpenMM objects.

In the code above, we have used the unit conversion constants that come with OpenMM (e.g., OpenMM::NmPerAngstrom) to perform these conversions. Combined with the naming convention of including the units in the variable name (e.g., initPosInAng), the unit conversion constants are useful reminders to pay attention to units and minimize errors.

Finally, the initialization routine creates the Integrator and Context for the simulation. Again, note the change in units for the arguments! The routine then gets the platform that will be used to run the simulation and returns that, along with the handle omm, back to the calling function.

// Choose an Integrator for advancing time, and a Context connecting the

// System with the Integrator for simulation. Let the Context choose the

// best available Platform. Initialize the configuration from the default

// positions we collected above. Initial velocities will be zero but could

// have been set here.

omm->integrator = new OpenMM::LangevinIntegrator(temperature,

frictionInPs,

stepSizeInFs * OpenMM::PsPerFs);

omm->context = new OpenMM::Context(*omm->system, *omm->integrator);

omm->context->setPositions(initialPosInNm);

platformName = omm->context->getPlatform().getName();

return omm;

10.4.2.5. myGetOpenMMState¶

The myGetOpenMMState function takes the handle and returns the time, energy, and data structure for the atoms in a way that the existing MD code can use them without modification.

static void

myGetOpenMMState(MyOpenMMData* omm, bool wantEnergy,

double& timeInPs, double& energyInKcal, MyAtomInfo atoms[])

Again, this is another interface routine in which you need to be very careful of your units! Note the conversion from the OpenMM units back to the units used in the existing MD code.

int infoMask = 0;

infoMask = OpenMM::State::Positions;

if (wantEnergy) {

infoMask += OpenMM::State::Velocities; // for kinetic energy (cheap)

infoMask += OpenMM::State::Energy; // for pot. energy (more expensive)

}

// Forces are also available (and cheap).

const OpenMM::State state = omm->context->getState(infoMask);

timeInPs = state.getTime(); // OpenMM time is in ps already

// Copy OpenMM positions into atoms array and change units from nm to Angstroms.

const std::vector<Vec3>& positionsInNm = state.getPositions();

for (int i=0; i < (int)positionsInNm.size(); ++i)

for (int j=0; j < 3; ++j)

atoms[i].posInAng[j] = positionsInNm[i][j] * OpenMM::AngstromsPerNm;

// If energy has been requested, obtain it and convert from kJ to kcal.

energyInKcal = 0;

if (wantEnergy)

energyInKcal = (state.getPotentialEnergy() + state.getKineticEnergy())

* OpenMM::KcalPerKJ;

10.4.2.6. myStepWithOpenMM¶

The myStepWithOpenMM routine takes the handle, uses it to find the Integrator, and then sets the number of steps for the Integrator to take. It does not return any values.

static void

myStepWithOpenMM(MyOpenMMData* omm, int numSteps) {

omm->integrator->step(numSteps);

}

10.4.2.7. myTerminateOpenMM¶

The myTerminateOpenMM routine takes the handle and deletes all the components, e.g., the Context and System, cleaning up the heap space.

static void

myTerminateOpenMM(MyOpenMMData* omm) {

delete omm;

}

10.5. HelloEthane Program¶

The HelloEthane program simulates ethane (H3-C-C-H3) in a vacuum. It is structured similarly to the HelloSodiumChloride example, but includes bonded forces (bond stretch, bond angle bend, dihedral torsion). In setting up these bonded forces, the program illustrates some of the other inconsistencies in definitions and units that you should watch out for.

The bonded forces are added to the system within the initialization interface routine, similar to how the non-bonded forces were added in the HelloSodiumChloride example:

// Create a System and Force objects within the System. Retain a reference

// to each force object so we can fill in the forces. Note: the System owns

// the force objects and will take care of deleting them; don't do it yourself!

OpenMM::System& system = *(omm->system = new OpenMM::System());

OpenMM::NonbondedForce& nonbond = *new OpenMM::NonbondedForce();

OpenMM::HarmonicBondForce& bondStretch = *new OpenMM::HarmonicBondForce();

OpenMM::HarmonicAngleForce& bondBend = *new OpenMM::HarmonicAngleForce();

OpenMM::PeriodicTorsionForce& bondTorsion = *new OpenMM::PeriodicTorsionForce();

system.addForce(&nonbond);

system.addForce(&bondStretch);

system.addForce(&bondBend);

system.addForce(&bondTorsion);

Constrainable and non-constrainable bonds: In the initialization routine, we also set up the bonds. If constraints are being used, then we tell the System about the constrainable bonds:

std::vector< std::pair<int,int> > bondPairs;

for (int i=0; bonds[i].type != EndOfList; ++i) {

const int* atom = bonds[i].atoms;

const BondType& bond = bondType[bonds[i].type];

if (UseConstraints && bond.canConstrain) {

system.addConstraint(atom[0], atom[1],

bond.nominalLengthInAngstroms * OpenMM::NmPerAngstrom);

}

Otherwise, we need to give the HarmonicBondForce the bond stretch parameters.

Warning: The constant used to specify the stiffness may be defined differently between the existing MD code and OpenMM. For instance, AMBER uses the constant, as given in the harmonic energy term kx2, where the force is 2kx (k = constant and x = distance). OpenMM wants the constant, as used in the force term kx (with energy 0.5 * kx2). So a factor of 2 must be introduced when setting the bond stretch parameters in an OpenMM system using data from an AMBER system.

bondStretch.addBond(atom[0], atom[1], bond.nominalLengthInAngstroms * OpenMM::NmPerAngstrom,

bond.stiffnessInKcalPerAngstrom2 * 2 * OpenMM::KJPerKcal *

OpenMM::AngstromsPerNm * OpenMM::AngstromsPerNm);

Non-bond exclusions: Next, we deal with non-bond exclusions. These are used for pairs of atoms that appear close to one another in the network of bonds in a molecule. For atoms that close, normal non-bonded forces do not apply or are reduced in magnitude. First, we create a list of bonds to generate the non- bond exclusions:

bondPairs.push_back(std::make_pair(atom[0], atom[1]));

OpenMM’s non-bonded force provides a convenient routine for creating the common exceptions. These are: (1) for atoms connected by one bond (1-2) or connected by just one additional bond (1-3), Coulomb and van der Waals terms do not apply; and (2) for atoms connected by three bonds (1-4), Coulomb and van der Waals terms apply but are reduced by a force-field dependent scale factor. In general, you may introduce additional exceptions, but the standard ones suffice here and in many other circumstances.

// Exclude 1-2, 1-3 bonded atoms from nonbonded forces, and scale down 1-4 bonded atoms.

nonbond.createExceptionsFromBonds(bondPairs, Coulomb14Scale, LennardJones14Scale);

// Create the 1-2-3 bond angle harmonic terms.

for (int i=0; angles[i].type != EndOfList; ++i) {

const int* atom = angles[i].atoms;

const AngleType& angle = angleType[angles[i].type];

// See note under bond stretch above regarding the factor of 2 here.

bondBend.addAngle(atom[0],atom[1],atom[2],

angle.nominalAngleInDegrees * OpenMM::RadiansPerDegree,

angle.stiffnessInKcalPerRadian2 * 2 *

OpenMM::KJPerKcal);

}

// Create the 1-2-3-4 bond torsion (dihedral) terms.

for (int i=0; torsions[i].type != EndOfList; ++i) {

const int* atom = torsions[i].atoms;

const TorsionType& torsion = torsionType[torsions[i].type];

bondTorsion.addTorsion(atom[0],atom[1],atom[2],atom[3],

torsion.periodicity,

torsion.phaseInDegrees * OpenMM::RadiansPerDegree,

torsion.amplitudeInKcal * OpenMM::KJPerKcal);

}

The rest of the code is similar to the HelloSodiumChloride example and will not be covered in detail here. Please refer to the program HelloEthane.cpp itself, which is well-commented, for additional details.

11. Platform-Specific Properties¶

When creating a Context, you can specify values for properties specific to a particular Platform. This is used to control how calculations are done in ways that are outside the scope of the generic OpenMM API.

To do this, pass both the Platform object and a map of property values to the Context constructor:

Platform& platform = Platform::getPlatformByName("OpenCL");

map<string, string> properties;

properties["OpenCLDeviceIndex"] = "1";

Context context(system, integrator, platform, properties);

After a Context is created, you can use the Platform’s getPropertyValue() method to query the values of properties.

11.1. OpenCL Platform¶

The OpenCL Platform recognizes the following Platform-specific properties:

- OpenCLPrecision: This selects what numeric precision to use for calculations. The allowed values are “single”, “mixed”, and “double”. If it is set to “single”, nearly all calculations are done in single precision. This is the fastest option but also the least accurate. If it is set to “mixed”, forces are computed in single precision but integration is done in double precision. This gives much better energy conservation with only a slight decrease in speed. If it is set to “double”, all calculations are done in double precision. This is the most accurate option, but is usually much slower than the others.

- OpenCLUseCpuPme: This selects whether to use the CPU-based PME implementation. The allowed values are “true” or “false”. Depending on your hardware, this might (or might not) improve performance. To use this option, you must have FFTW (single precision, multithreaded) installed, and your CPU must support SSE 4.1.

- OpenCLPlatformIndex: When multiple OpenCL implementations are installed on your computer, this is used to select which one to use. The value is the zero-based index of the platform (in the OpenCL sense, not the OpenMM sense) to use, in the order they are returned by the OpenCL platform API. This is useful, for example, in selecting whether to use a GPU or CPU based OpenCL implementation.

- OpenCLDeviceIndex: When multiple OpenCL devices are available on your computer, this is used to select which one to use. The value is the zero-based index of the device to use, in the order they are returned by the OpenCL device API.

The OpenCL Platform also supports parallelizing a simulation across multiple GPUs. To do that, set the OpenCLDeviceIndex property to a comma separated list of values. For example,

properties["OpenCLDeviceIndex"] = "0,1";

This tells it to use both devices 0 and 1, splitting the work between them.

11.2. CUDA Platform¶

The CUDA Platform recognizes the following Platform-specific properties:

- CudaPrecision: This selects what numeric precision to use for calculations. The allowed values are “single”, “mixed”, and “double”. If it is set to “single”, nearly all calculations are done in single precision. This is the fastest option but also the least accurate. If it is set to “mixed”, forces are computed in single precision but integration is done in double precision. This gives much better energy conservation with only a slight decrease in speed. If it is set to “double”, all calculations are done in double precision. This is the most accurate option, but is usually much slower than the others.

- CudaUseCpuPme: This selects whether to use the CPU-based PME implementation. The allowed values are “true” or “false”. Depending on your hardware, this might (or might not) improve performance. To use this option, you must have FFTW (single precision, multithreaded) installed, and your CPU must support SSE 4.1.

- CudaCompiler: This specifies the path to the CUDA kernel compiler. If you do

not specify this, OpenMM will try to locate the compiler itself. Specify this

only when you want to override the default location. The logic used to pick the

default location depends on the operating system:

- Mac/Linux: It first looks for an environment variable called OPENMM_CUDA_COMPILER. If that is set, its value is used. Otherwise, the default location is set to /usr/local/cuda/bin/nvcc.

- Windows: It looks for an environment variable called CUDA_BIN_PATH, then appends nvcc.exe to it. That environment variable is set by the CUDA installer, so it usually is present.

- CudaTempDirectory: This specifies a directory where temporary files can be written while compiling kernels. OpenMM usually can locate your operating system’s temp directory automatically (for example, by looking for the TEMP environment variable), so you rarely need to specify this.

- CudaDeviceIndex: When multiple CUDA devices are available on your computer, this is used to select which one to use. The value is the zero-based index of the device to use, in the order they are returned by the CUDA API.

- CudaUseBlockingSync: This is used to control how the CUDA runtime synchronizes between the CPU and GPU. If this is set to “true” (the default), CUDA will allow the calling thread to sleep while the GPU is performing a computation, allowing the CPU to do other work. If it is set to “false”, CUDA will spin-lock while the GPU is working. Setting it to “false” can improve performance slightly, but also prevents the CPU from doing anything else while the GPU is working.

The CUDA Platform also supports parallelizing a simulation across multiple GPUs. To do that, set the CudaDeviceIndex property to a comma separated list of values. For example,

properties["CudaDeviceIndex"] = "0,1";

This tells it to use both devices 0 and 1, splitting the work between them.

11.3. CPU Platform¶

The CPU Platform recognizes the following Platform-specific properties:

CpuThreads: This specifies the number of CPU threads to use. If you do not specify this, OpenMM will select a default number of threads as follows:

- If an environment variable called OPENMM_CPU_THREADS is set, its value is used as the number of threads.

- Otherwise, the number of threads is set to the number of logical CPU cores in the computer it is running on.

Usually the default value works well. This is mainly useful when you are running something else on the computer at the same time, and you want to prevent OpenMM from monopolizing all available cores.

12. Using OpenMM with Software Written in Languages Other than C++¶

Although the native OpenMM API is object-oriented C++ code, it is possible to directly translate the interface so that it is callable from C, Fortran 95, and Python with no substantial conceptual changes. We have developed a straightforward mapping for these languages that, while perhaps not the most elegant possible, has several advantages:

- Almost all documentation, training, forum discussions, and so on are equally useful to users of all these languages. There are syntactic differences of course, but all the important concepts remain unchanged.

- We are able to generate the C, Fortran, and Python APIs from the C++ API. Obviously, this reduces development effort, but more importantly it means that the APIs are likely to be error-free and are always available immediately when the native API is updated.

- Because OpenMM performs expensive operations “in bulk” there is no noticeable overhead in accessing these operations through the C, Fortran, or Python APIs.

- All symbols introduced to a C or Fortran program begin with the prefix “OpenMM_” so will not interfere with symbols already in use.

Availability of APIs in other languages: All necessary C and Fortran bindings are built in to the main OpenMM library; no separate library is required. The Python wrappers are contained in a module that is distributed with OpenMM and that can be installed by executing its setup.py script in the standard way.

(This doesn’t apply to most users: if you are building your own OpenMM from source using CMake and want the API bindings generated, be sure to enable the OPENMM_BUILD_C_AND_FORTRAN_WRAPPERS option for C and Fortran, or OPENMM_BUILD_PYTHON_WRAPPERS option for Python. The Python module will be placed in a subdirectory of your main build directory called “python”)

Documentation for APIs in other languages: While there is extensive Doxygen documentation available for the C++ and Python APIs, there is no separate on-line documentation for the C and Fortran API. Instead, you should use the C++ documentation, employing the mappings described here to figure out the equivalent syntax in C or Fortran.

12.1. C API¶

Before you start writing your own C program that calls OpenMM, be sure you can build and run the two C examples that are supplied with OpenMM (see Chapter 10). These can be built from the supplied Makefile on Linux and Mac, or supplied NMakefile and Visual Studio solution files on Windows.

The example programs are HelloArgonInC and HelloSodiumChlorideInC. The argon example serves as a quick check that your installation is set up properly and you know how to build a C program that is linked with OpenMM. It will also tell you whether OpenMM is executing on the GPU or is running (slowly) on the Reference platform. However, the argon example is not a good template to follow for your own programs. The sodium chloride example, though necessarily simplified, is structured roughly in the way we recommended you set up your own programs to call OpenMM. Please be sure you have both of these programs executing successfully on your machine before continuing.

12.1.1. Mechanics of using the C API¶